Chat with local LLMs using n8n and Ollama


About This Workflow

What This Workflow Does

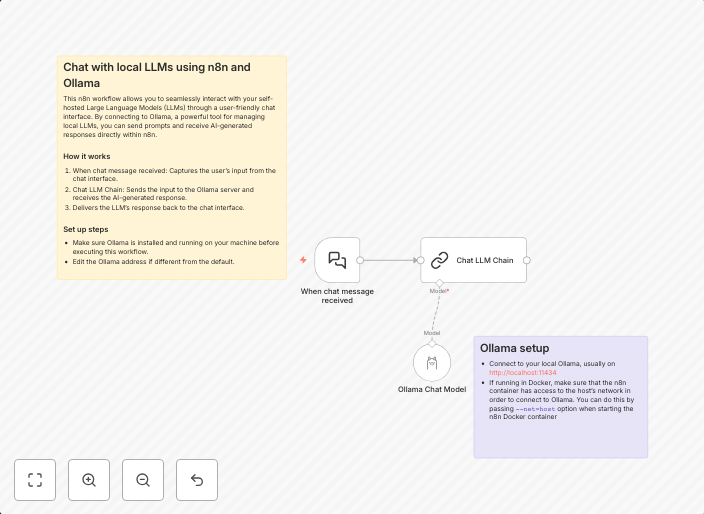

This n8n workflow provides a chat interface for self-hosted Large Language Models (LLMs) served by Ollama. You send a message through the chat, the workflow passes it to your local LLM, and the model's response comes back in the same interface.

Who Should Use This

This workflow is aimed at developers, AI researchers, and anyone who wants to work with local Large Language Models. It is particularly useful if you already self-host LLMs and want to integrate them into your workflows or applications.

Key Features

- Direct LLM interaction: Query your self-hosted LLMs and receive their responses through a chat interface.

- No external API dependencies: Because Ollama runs the models locally, the workflow makes no external API calls. Your prompts and responses are not sent to a third-party service, and the workflow does not depend on that service being available.

- Customizable chat interface: You can tailor the chat interface to fit your specific needs, making it easier to integrate with your applications.

- Native integration with n8n: The workflow runs inside n8n, so you can manage and automate it alongside your other workflows.
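To make the query-and-response exchange above concrete, here is a minimal Python sketch of one chat turn sent straight to Ollama's local HTTP API, outside of n8n. It is an illustration under stated assumptions, not how the workflow itself is implemented: it assumes Ollama is running at its default address `http://localhost:11434`, and the function names (`build_chat_payload`, `extract_reply`, `chat`) are hypothetical helpers made up for this example.

```python
import json
import urllib.request

# Assumption: Ollama is listening on its default local address.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"


def build_chat_payload(model, user_message, history=None):
    """Build the JSON body for a non-streaming chat request.

    `history` is an optional list of earlier {"role", "content"} messages,
    which is how a chat interface carries context across turns.
    """
    messages = list(history or [])
    messages.append({"role": "user", "content": user_message})
    return {"model": model, "messages": messages, "stream": False}


def extract_reply(response_body):
    """Pull the assistant's text out of a parsed non-streaming chat response."""
    return response_body["message"]["content"]


def chat(model, user_message, history=None, url=OLLAMA_CHAT_URL):
    """Send one chat turn to a running Ollama server and return the reply text."""
    body = json.dumps(build_chat_payload(model, user_message, history)).encode("utf-8")
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        return extract_reply(json.load(response))
```

With a model already pulled locally, a call such as `chat("llama3.2", "Why is the sky blue?")` would return the model's answer as a string; `llama3.2` is only a placeholder for whichever model you have installed.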

How to Get Started

To get started, import this workflow into your n8n instance and customize it to fit your needs. You can then chat with your self-hosted LLMs through the chat interface.
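Before customizing the workflow, it can help to confirm that Ollama is reachable and to see which models it has available. The sketch below is one way to check this from Python, assuming Ollama's default address `http://localhost:11434`; `model_names` and `list_local_models` are hypothetical helper names used only for this example.

```python
import json
import urllib.request

# Assumption: Ollama is listening on its default local address.
OLLAMA_TAGS_URL = "http://localhost:11434/api/tags"


def model_names(tags_response):
    """Return the model names from a parsed /api/tags response."""
    return [entry["name"] for entry in tags_response.get("models", [])]


def list_local_models(url=OLLAMA_TAGS_URL):
    """Ask a running Ollama server which models are installed locally."""
    with urllib.request.urlopen(url, timeout=10) as response:
        return model_names(json.load(response))
```

If `list_local_models()` raises a connection error, Ollama is probably not running or is listening on a different address. If it returns an empty list, no model has been pulled yet.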


Affiliate Disclosure: We may earn a commission if you sign up for n8n through our links. This doesn't affect our recommendations.