Evaluate AI agent response relevance using OpenAI and cosine similarity

This n8n template demonstrates how to calculate the evaluation metric "Relevance", which in this scenario measures the relevance of the agent's response to...

About This Workflow

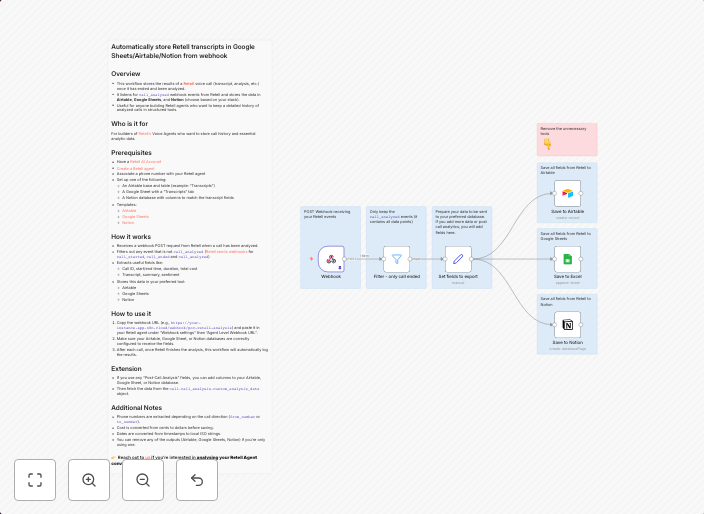

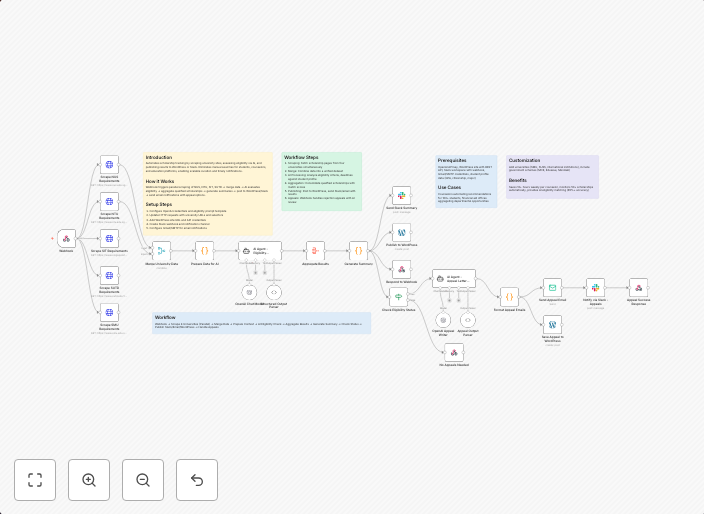

What This Workflow Does

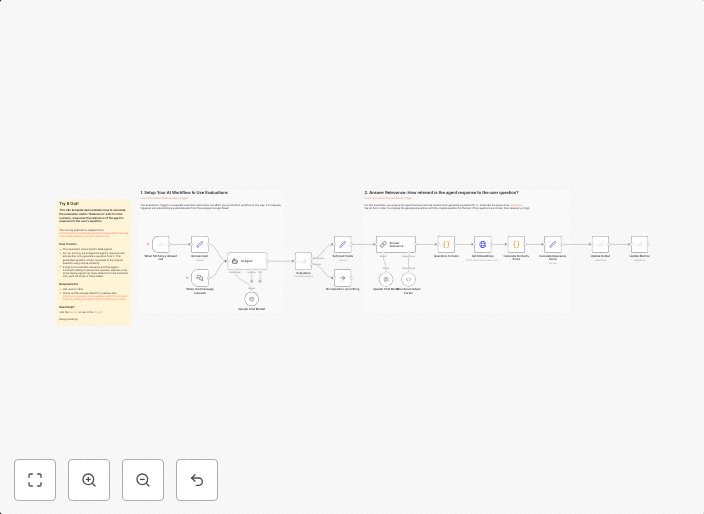

This n8n template evaluates how relevant an AI agent's response is to a given input, using OpenAI and cosine similarity. The workflow computes a relevance score from the cosine similarity between vector representations (embeddings) of the texts being compared, so a response that stays on the topic of the input scores higher than one that drifts away from it. This gives developers a repeatable, numeric measure of how relevant their agent's responses are.
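Cosine similarity, the measure this workflow relies on, can be sketched in a few lines of Python. This is a minimal illustration rather than the template's own code: the function name and the toy three-dimensional vectors are assumptions, and real embeddings from OpenAI have far more dimensions.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        # A zero vector has no direction; treat it as "no similarity".
        return 0.0
    return dot / (norm_a * norm_b)


# Toy vectors standing in for real embeddings (hypothetical values).
input_vec = [1.0, 0.0, 1.0]
on_topic_vec = [2.0, 0.0, 2.0]   # points in the same direction as the input
off_topic_vec = [0.0, 3.0, 0.0]  # orthogonal to the input

print(cosine_similarity(input_vec, on_topic_vec))   # close to 1: highly similar
print(cosine_similarity(input_vec, off_topic_vec))  # 0.0: unrelated
```

Because the score depends only on direction and not on vector length, a long response and a short one can still score as equally relevant if they cover the same topic.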

Who Should Use This

This workflow is ideal for developers and AI engineers working on chatbots, virtual assistants, or other conversational AI applications. It can help them improve the performance of their AI agents by evaluating the relevance of their responses.

Key Features

- Integrates with OpenAI to generate and analyze AI agent responses

- Calculates a relevance metric using cosine similarity

- Allows for flexible evaluation of AI agent responses to different inputs

- Provides a quantitative evaluation metric for AI agent performance
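To make the features above concrete, the sketch below scores several input/response pairs in one pass. It is an illustration under stated assumptions, not the workflow's implementation: `toy_embed` is a hypothetical stand-in that counts words from a tiny made-up vocabulary, where the real workflow would obtain embeddings from OpenAI, and this page does not specify exactly which texts the template compares.

```python
import math
import re
from typing import Callable, List, Sequence, Tuple

# Any function mapping text to a vector can be plugged in here.
Embedder = Callable[[str], Sequence[float]]

# Hypothetical toy vocabulary; a real embedding model needs no such list.
VOCAB = ["refund", "order", "shipping", "weather", "password"]


def toy_embed(text: str) -> List[float]:
    """Stand-in embedder: counts how often each vocabulary word occurs."""
    words = re.findall(r"[a-z]+", text.lower())
    return [float(words.count(term)) for term in VOCAB]


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # no direction to compare
    return dot / (norm_a * norm_b)


def score_cases(cases: List[Tuple[str, str]], embed: Embedder) -> List[float]:
    """Return one relevance score per (input, response) pair."""
    return [cosine_similarity(embed(q), embed(r)) for q, r in cases]


cases = [
    ("How do I get a refund for my order?",
     "You can request a refund from the order page."),
    ("How do I get a refund for my order?",
     "The weather is sunny today."),
]
scores = score_cases(cases, toy_embed)
for (question, response), score in zip(cases, scores):
    print(f"{score:.2f}  {response}")
```

Passing the embedder in as a parameter keeps the scoring logic independent of the embedding provider, so the same loop can be run against different inputs or models.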

How to Get Started

To use this workflow, import it into your n8n instance and customize it by adjusting the OpenAI API settings and input parameters to match your specific use case. This will enable you to evaluate the relevance of your AI agent's responses and make data-driven decisions to improve its performance.
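Once relevance scores have been collected, a small summary can help decide where an agent needs work. The sketch below is one hypothetical way to do that: the `summarize` helper, the case names, the score values, and the 0.7 threshold are all illustrative assumptions, not outputs or settings of the workflow.

```python
from statistics import mean
from typing import Dict, List, Union


def summarize(scores: Dict[str, float],
              threshold: float = 0.7) -> Dict[str, Union[float, List[str]]]:
    """Aggregate per-case relevance scores and list cases below the threshold."""
    if not scores:
        raise ValueError("no scores to summarize")
    values = list(scores.values())
    flagged = sorted(name for name, score in scores.items() if score < threshold)
    return {"mean": mean(values), "min": min(values), "flagged": flagged}


# Made-up scores for three test cases (illustrative only).
report = summarize({
    "refund-question": 0.91,
    "shipping-question": 0.42,
    "password-reset": 0.78,
})
print(report["flagged"])  # cases scoring below the threshold
```

A suitable threshold depends on the embedding model and the kind of questions being asked, so it is worth calibrating against a handful of responses that have been judged by hand.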

