Evaluate hybrid search for legal question-answering using Qdrant & BM25/mxbai

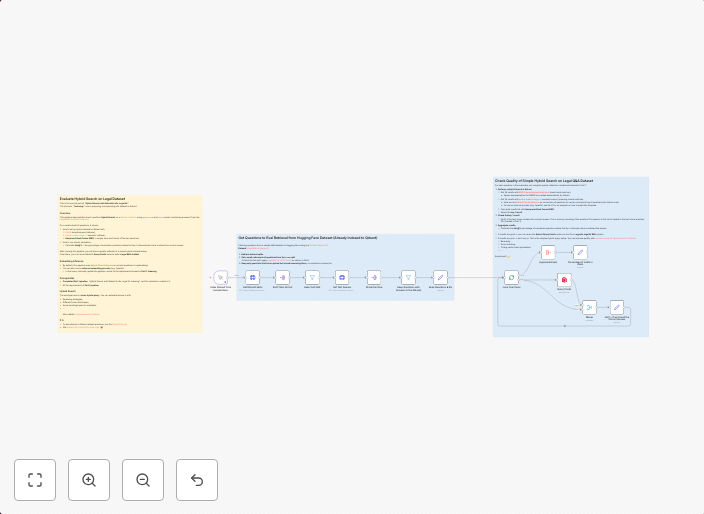

Evaluate Hybrid Search on Legal Dataset

This is the second part of "Hybrid Search with Qdrant & n8n, Legal AI." The first part, "Indexing", covers...

About This Workflow

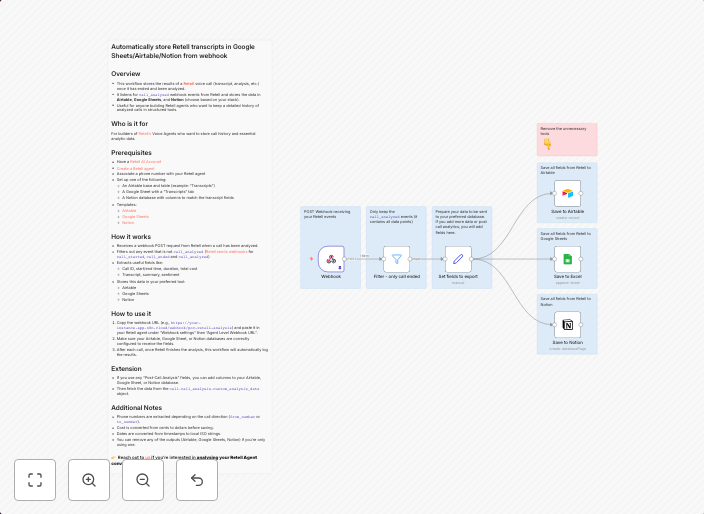

What This Workflow Does

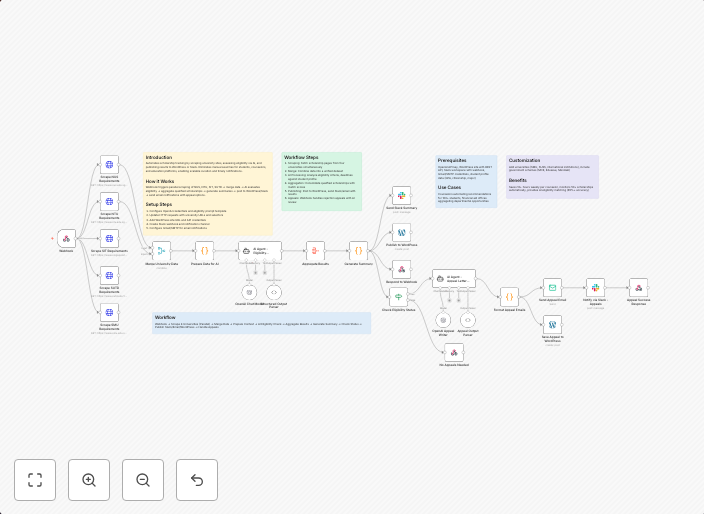

This workflow evaluates hybrid search for legal question-answering using Qdrant, combining BM25 keyword retrieval with mxbai dense embeddings. It is the second part of the series "Hybrid Search with Qdrant & n8n, Legal AI." The workflow runs hybrid search against a legal dataset and measures how well the retrieved results match the questions, so you can see whether the retrieval setup is good enough before building a question-answering system on top of it.
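To make "evaluating retrieval" concrete, here is a minimal sketch of one common metric, hit rate at k: the share of questions whose expected document appears among the top k results. The metric choice, the function name `hit_rate_at_k`, and the sample IDs are assumptions for illustration; this page does not state which metric the workflow computes.

```python
from typing import Dict, List


def hit_rate_at_k(
    retrieved: Dict[str, List[str]],
    relevant: Dict[str, str],
    k: int = 5,
) -> float:
    """Fraction of questions whose expected document id is in the top-k results.

    retrieved: question id -> ranked list of document ids returned by search
    relevant:  question id -> the single document id considered correct
    """
    if not relevant:
        return 0.0
    hits = sum(
        1
        for question_id, doc_id in relevant.items()
        if doc_id in retrieved.get(question_id, [])[:k]
    )
    return hits / len(relevant)


# Hypothetical search results for three legal questions.
retrieved = {
    "q1": ["d3", "d1", "d7"],
    "q2": ["d9", "d4", "d2"],
    "q3": ["d5", "d6", "d8"],
}
# Hypothetical ground truth: one expected document per question.
relevant = {"q1": "d1", "q2": "d2", "q3": "d0"}

score_at_1 = hit_rate_at_k(retrieved, relevant, k=1)
score_at_3 = hit_rate_at_k(retrieved, relevant, k=3)
print(f"hit rate@1 = {score_at_1:.2f}, hit rate@3 = {score_at_3:.2f}")
```

Comparing the score at several values of k shows whether the correct passage is ranked first or merely somewhere near the top, which matters when only a few results are passed on to a language model.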

Who Should Use This

This workflow is designed for developers, data scientists, and AI researchers working on multimodal AI and RAG (retrieval-augmented generation) projects. They can use it to measure and tune their hybrid search setup before relying on it in a legal question-answering system.

Key Features

- Evaluates hybrid search performance on a legal dataset

- Utilizes Qdrant & BM25/mxbai for accurate search results

- Provides insights into the effectiveness of hybrid search for legal question-answering

- Supports multimodal AI and AI RAG projects

- Integrates with existing workflows for comprehensive automation
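For readers new to hybrid search, the sketch below shows one common way to merge a keyword (BM25) ranking with a dense-embedding ranking: reciprocal rank fusion (RRF). This is an illustration under assumptions, not the workflow's confirmed method; this page does not say which fusion strategy is used, and the function name, the constant `k = 60`, and the document IDs are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, List


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Merge several ranked lists of document ids into one list.

    Each document scores 1 / (k + rank) for every list it appears in,
    where rank starts at 1. Higher total score means a better fused rank.
    """
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by descending score; break ties by document id for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical rankings for the same query from two retrievers.
bm25_ranking = ["d2", "d1", "d4"]   # sparse, keyword-based
dense_ranking = ["d1", "d3", "d2"]  # dense, embedding-based

fused = reciprocal_rank_fusion([bm25_ranking, dense_ranking])
print(fused)
```

RRF uses only rank positions, so it avoids having to normalize BM25 scores and embedding similarities onto a common scale.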

How to Get Started

To use this workflow, import it into your n8n environment and customize it for your project. This may involve adjusting parameters, connecting additional integrations, or modifying the workflow logic.


Affiliate Disclosure: We may earn a commission if you sign up for n8n through our links. This doesn't affect our recommendations.