Detect hallucinations using specialised Ollama model bespoke-minicheck

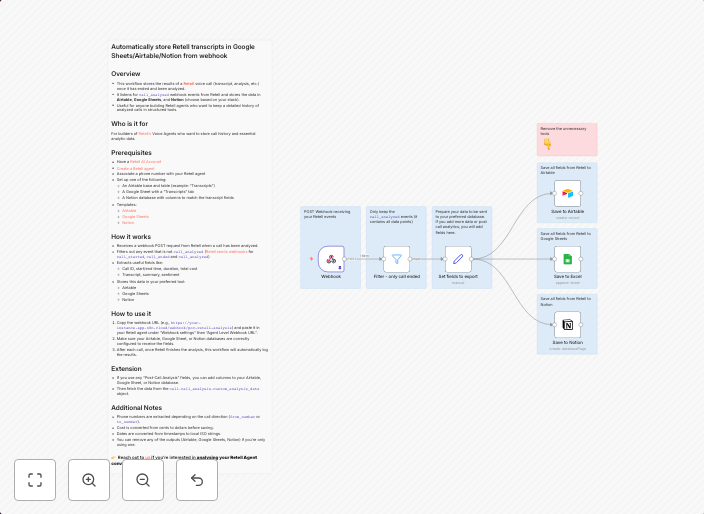

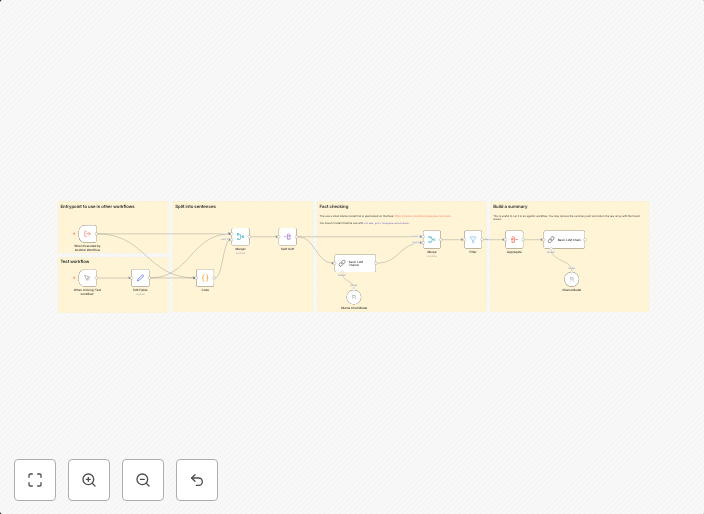


About This Workflow

What This Workflow Does

This workflow uses bespoke-minicheck, a specialized fact-checking model served through Ollama, to detect hallucinations in a given text. It compares the text with its original source and flags statements that the source does not support. This helps catch inaccuracies, for example in AI-generated content, before the text is published or reused.
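The core check can be sketched in Python against Ollama's REST API. This is a minimal sketch, not the workflow's own implementation (which is built from n8n nodes). It assumes a local Ollama server at the default address `http://localhost:11434` and the `Document: {document}` / `Claim: {claim}` prompt layout described on the bespoke-minicheck model page, to which the model answers "Yes" or "No". The helper names `build_prompt`, `parse_verdict` and `check_claim` are hypothetical.

```python
import json
import urllib.request

# Assumption: Ollama runs locally on its default port.
OLLAMA_URL = "http://localhost:11434"
MODEL = "bespoke-minicheck"


def build_prompt(document: str, claim: str) -> str:
    """Format one document/claim pair in the 'Document: ... / Claim: ...' layout."""
    return f"Document: {document}\nClaim: {claim}"


def parse_verdict(raw: str) -> bool:
    """Interpret the model's reply: True if it starts with 'Yes' (claim supported)."""
    return raw.strip().lower().startswith("yes")


def check_claim(document: str, claim: str, base_url: str = OLLAMA_URL) -> bool:
    """Ask bespoke-minicheck, via POST /api/generate, whether `claim` is supported by `document`."""
    payload = {
        "model": MODEL,
        "prompt": build_prompt(document, claim),
        "stream": False,  # return a single JSON object instead of a token stream
        "options": {"temperature": 0},  # keep the yes/no answer deterministic
    }
    request = urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    # The generated text is returned in the "response" field.
    return parse_verdict(body["response"])
```

With the model pulled, `check_claim("The store opens at 9 am.", "The store opens at 10 am.")` would be expected to return `False`, since the source does not support the claim.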

Who Should Use This

This workflow is ideal for developers, data analysts, or content creators who need to ensure the accuracy and reliability of information. It can also be useful for businesses or organizations that require robust fact-checking for publications, articles, or social media content.

Key Features

- Automated fact-checking: The workflow uses AI models to compare texts with their original sources to detect potential discrepancies.

- Hallucination detection: The bespoke-minicheck model, served through Ollama, is designed to identify statements in AI-generated content that are not supported by the original information.

- Enhanced accuracy: By flagging statements that their source does not support, the workflow helps improve the reliability of information and reduces the risk of spreading misinformation.
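Comparing a whole text with its source usually means checking it piece by piece, since bespoke-minicheck judges one claim at a time. The sketch below assumes sentence-level checking with a naive regex splitter; the workflow's own splitting logic may differ. `split_sentences` and `find_unsupported` are hypothetical names, and `is_supported` stands for any function that takes `(document, claim)` and returns a bool, for example a wrapper around an Ollama call to bespoke-minicheck.

```python
import re
from typing import Callable, List, Tuple

# Naive splitter: break after '.', '!' or '?' followed by whitespace.
# Assumption: real text with abbreviations (e.g. "Dr.") needs a smarter splitter.
_SENTENCE_END = re.compile(r"(?<=[.!?])\s+")


def split_sentences(text: str) -> List[str]:
    """Split text into sentences, dropping empty fragments."""
    return [s.strip() for s in _SENTENCE_END.split(text.strip()) if s.strip()]


def find_unsupported(
    source: str,
    text: str,
    is_supported: Callable[[str, str], bool],
) -> List[Tuple[int, str]]:
    """Return (index, sentence) for every sentence the checker does not accept."""
    flagged = []
    for index, sentence in enumerate(split_sentences(text)):
        if not is_supported(source, sentence):
            flagged.append((index, sentence))
    return flagged
```

Passing a stub checker keeps the aggregation testable without a running model. For example, `find_unsupported("The meeting is on Monday", "The meeting is on Monday. It lasts three hours.", lambda doc, claim: claim.rstrip(".!?") in doc)` returns `[(1, "It lasts three hours.")]`.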

How to Get Started

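To use this workflow, import it into your n8n instance. It needs a running Ollama server with the bespoke-minicheck model pulled (`ollama pull bespoke-minicheck`) and an Ollama credential in n8n that points to that server. Then customize the settings, such as the text and the source to compare, and run the workflow to flag statements in your text that the source does not support.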
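Before importing the workflow, it can help to confirm that Ollama is reachable and the model has been pulled. This is a minimal sketch assuming the default local address; it uses Ollama's `GET /api/tags` endpoint, which lists locally available models. `fetch_tags` and `model_available` are hypothetical helper names.

```python
import json
import urllib.request
from typing import Iterable


def model_available(tags: dict, model: str = "bespoke-minicheck") -> bool:
    """True if `model` appears in an /api/tags payload, with or without a tag like ':latest'."""
    names: Iterable[str] = (m.get("name", "") for m in tags.get("models", []))
    return any(name == model or name.startswith(model + ":") for name in names)


def fetch_tags(base_url: str = "http://localhost:11434") -> dict:
    """Fetch the list of locally pulled models from Ollama's GET /api/tags endpoint."""
    with urllib.request.urlopen(f"{base_url}/api/tags") as response:
        return json.load(response)
```

`model_available(fetch_tags())` should return `True` once `ollama pull bespoke-minicheck` has completed; if it returns `False`, pull the model before running the workflow.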

